Moz Q&A is closed.

After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies. More details here.

Dynamically-generated .PDF files, instead of normal pages, indexed by and ranking in Google

-

Hi,

I've come across a tough problem. I am working on an online store website (built on the Joomla CMS) that lets visitors view product details in .PDF format. Now when I search for my site's name in Google, the SERP displays my .PDF files in the first couple of positions (shown in the normal PDF result format: [PDF]...), and I cannot find the normal pages on SERP #1 unless I search for the full site domain. I really don't want this! Would you please tell me how to figure out the problem and solve it? I can actually remove the component (VirtueMart) that is in charge of generating the .PDF files. Right now I am trying to redirect all the .PDF pages ranking in Google to a 404 page and remove the functionality. I also plan to regenerate my sitemap and submit it to Google. Will that work for me? I would really appreciate it if you could help solve this problem. Thanks very much.

Sincerely

SEOmoz Pro Member

-

Recently discovered this:

Indicate the canonical version of a URL by responding with the Link rel="canonical" HTTP header. Adding rel="canonical" to the head section of a page is useful for HTML content, but it can't be used for PDFs and other file types indexed by Google Web Search. In these cases you can indicate a canonical URL by responding with the Link rel="canonical" HTTP header, like this (note that to use this option, you'll need to be able to configure your server):

Link: <http://www.example.com/downloads/white-paper.pdf>; rel="canonical"

Google currently supports these link header elements for Web Search only.

- http://support.google.com/webmasters/bin/answer.py?hl=en&answer=139394
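For anyone wiring this up by hand, here is a minimal Python sketch of how the header value quoted above could be assembled for a PDF response. The function name and the product page URL are hypothetical, and pointing a PDF at its HTML twin (rather than at another PDF) is an assumption about what the original poster wants. Google treats the header as a hint, so results are not guaranteed.

```python
from typing import List, Tuple


def canonical_link_header(canonical_url: str) -> Tuple[str, str]:
    """Build the (name, value) pair for a rel="canonical" Link header.

    The URL is wrapped in angle brackets, as in the quoted example.
    """
    if "<" in canonical_url or ">" in canonical_url:
        raise ValueError("canonical URL must not contain angle brackets")
    return ("Link", '<{}>; rel="canonical"'.format(canonical_url))


# Hypothetical headers for a dynamically generated product PDF whose
# preferred version is the normal HTML product page (assumed URL).
pdf_response_headers: List[Tuple[str, str]] = [
    ("Content-Type", "application/pdf"),
    canonical_link_header("http://www.example.com/products/widget.html"),
]
```

The resulting pairs can be handed to whatever layer actually sends the response; on a Joomla site that would more likely be server or PHP configuration than Python.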

-

I would consider either excluding the PDFs from the index with your robots.txt in conjunction with resubmitting your sitemap (which you're all over), or placing a text link at the bottom of each PDF pointing back to the HTML version of that page (which, all things being equal, should cause the HTML version of the page to rank instead). I am not sure about serving 404 headers to Google instead of the PDFs that are currently in the index. Why not 301 to the HTML version of each PDF? Obviously that can't be a permanent solution, as you will eventually want to restore the functionality to users, right? But it will tell Googlebot that the content of each PDF is to be found from here on out at the URL containing the HTML version. This is a case where it would be handy to serve one thing to the bots and another to the human viewers, but I am afraid that doing so could get you into trouble.

I am interested in your case though—let us know what, if anything besides the 404s and sitemap resubmittal, you end up trying and what happens with it. I'm also curious to know what other mozzers suggest.
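To make the 301 idea above concrete, here is a rough Python sketch of the mapping logic only. It assumes, purely for illustration, that each PDF URL differs from its HTML page only by the file extension. The real VirtueMart URL pattern is not given in this thread, so the function name and the mapping rule are hypothetical and would need adapting.

```python
from typing import Optional, Tuple


def pdf_redirect(path: str) -> Optional[Tuple[int, str]]:
    """Return (301, html_path) for a PDF request, or None otherwise.

    Assumption: /products/widget.pdf has an HTML twin at
    /products/widget.html. This rule is made up for the example.
    """
    if not path.lower().endswith(".pdf"):
        return None  # not a PDF request, serve the page normally
    # Drop the 4-character ".pdf" suffix and point at the HTML version.
    return (301, path[:-4] + ".html")
```

A request handler would call `pdf_redirect` first and, when it returns a value, answer with that status code and a Location header set to the returned path.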


Related Questions

-

Page disappears from Google search results

Hi, I recently encountered a very strange problem.

Technical SEO | | JoelssonMedia

One of the pages I published in my website ranked very well for a couple of days on top 5, then after a couple of days, the page completely vanished, no matter how direct I search for it, does not appear on the results, I check GSC, everything seems to be normal, but when checking Google analytics, I find it strange that there is no data on the page since it disappeared and it also does not show up on the 'active pages' section no matter how many different computers i keep it open. I have checked to page 9, and used a couple of keyword tools and it appears nowhere! It didn't have any back links, but it was unique and high quality. I have checked on the page does still exist and it is still readable. Has this ´happened to anyone before? Any thoughts would be gratefully received.0 -

Removing a site from Google index with no index met tags

Hi there! I wanted to remove a duplicated site from the google index. I've read that you can do this by removing the URL from Google Search console and, although I can't find it in Google Search console, Google keeps on showing the site on SERPs. So I wanted to add a "no index" meta tag to the code of the site however I've only found out how to do this for individual pages, can you do the same for a entire site? How can I do it? Thank you for your help in advance! L

Technical SEO | | Chris_Wright1 -

Google has deindexed a page it thinks is set to 'noindex', but is in fact still set to 'index'

A page on our WordPress powered website has had an error message thrown up in GSC to say it is included in the sitemap but set to 'noindex'. The page has also been removed from Google's search results. Page is https://www.onlinemortgageadvisor.co.uk/bad-credit-mortgages/how-to-get-a-mortgage-with-bad-credit/ Looking at the page code, plus using Screaming Frog and Ahrefs crawlers, the page is very clearly still set to 'index'. The SEO plugin we use has not been changed to 'noindex' the page. I have asked for it to be reindexed via GSC but I'm concerned why Google thinks this page was asked to be noindexed. Can anyone help with this one? Has anyone seen this before, been hit with this recently, got any advice...?

Technical SEO | | d.bird0 -

Wrong page title in Google

Hi there, A while ago we took over the domain www.hoesjes.nl and forwarded it to our website www.telefoonhoesjesxl.nl. If you perform a search for the keyword 'hoesjes' in Google then we (www.telefoonhoesjesxl.nl) show up on an organic number 1 position. The problem is that the page title isn't correct. Google shows the page title of the website hoesjes.nl we took over and (correctly?) redirected to our domain www.telefoonhoesjesxl.nl. Does anybody have any idea how to get rid of this wrong page title in Google?

Technical SEO | | MarcelMoz

Here you can find a screenshot of what I mean. Thanks! Marcel0 -

Why is Google Webmaster Tools showing 404 Page Not Found Errors for web pages that don't have anything to do with my site?

I am currently working on a small site with approx 50 web pages. In the crawl error section in WMT Google has highlighted over 10,000 page not found errors for pages that have nothing to do with my site. Anyone come across this before?

Technical SEO | | Pete40 -

Image Indexing Issue by Google

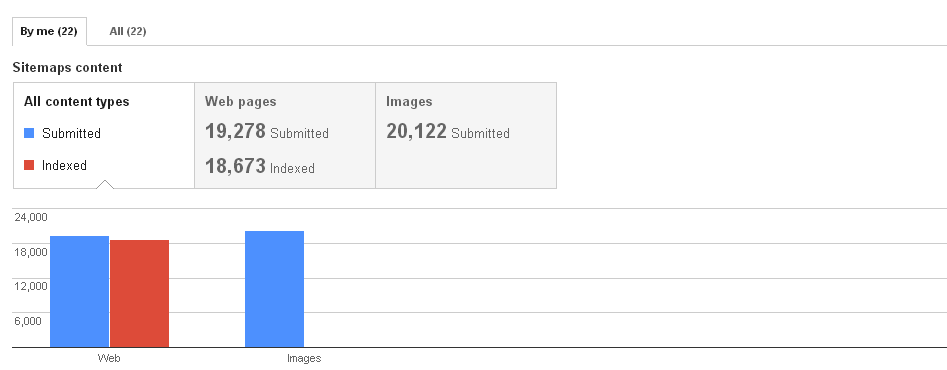

Hello All,My URL is: www.thesalebox.comI have Submitted my image Sitemap in google webmaster tool on 10th Oct 2013,Still google could not indexing any of my web images,Please refer my sitemap - www.thesalebox.com/AppliancesHomeEntertainment.xml and www.thesalebox.com/Hardware.xmland my webmaster status and image indexing status are below,

Technical SEO | | CommercePundit Can you please help me, why my images are not indexing in google yet? is there any issue? please give me suggestions?Thanks!

0

Can you please help me, why my images are not indexing in google yet? is there any issue? please give me suggestions?Thanks!

0 -

Google ranking my site abroad, how to stop?

Hi Mozzers, I have a UK based ecommerce site, that sells only to the UK. Over the last month Google has started ranking my site on foreign flavours of Google, so I keep getting traffic coming to my site from Europe, America and the far east that we could never sell to, and as a result bounce is going up and engagement is going down. They are definitely coming to the site from google searches that relate to my product type, but in regions I do not service. Is there a way to stop google doing this? I have the target set to UK in WMT, but is there anything else I can do? I worried about my UK ranking being damaged by an increasing overall bounce rate. Thanks

Technical SEO | | FDFPres0