Content Issues: Duplicate Content

-

Hi there

Moz flagged the following content issues: the page has duplicate content and missing canonical tags.

What is the best solution?

Industrial Flooring » IRL Group Ltd
https://irlgroup.co.uk/industrial-flooring/

Industrial Flooring » IRL Group Ltd
https://irlgroup.co.uk/index.php/industrial-flooring

Industrial Flooring » IRL Group Ltd
https://irlgroup.co.uk/index.php/industrial-flooring/

-

Duplicate content refers to identical or substantially similar content found on multiple web pages. Search engines like Google do not normally penalize a site just for having duplicate content, but duplicates can split ranking signals between URLs and lead the search engine to index a version you did not intend. To avoid this issue, website owners should regularly audit their content, use canonical tags to indicate preferred URLs, and avoid scraping or copying content from other sites. Creating unique, valuable content tailored to the target audience not only improves search engine rankings but also enhances credibility and user engagement.

-

@Kingagogomarketing said in Content Issues: Duplicate Content:

Moz flagged the following content issues: the page has duplicate content and missing canonical tags.

What is the best solution?

To address the flagged content issues of duplicate content and missing canonical tags, here are some recommended solutions:

Identify and Resolve Duplicate Content:

- Use tools like Screaming Frog or Siteliner to identify duplicate content across your website.

- Once identified, consolidate duplicate content by either:

  - Updating the content to make it unique and valuable.

  - Setting up 301 redirects to redirect duplicate URLs to the original (canonical) URL.

  - Implementing canonical tags to specify the preferred version of the content (if applicable).

- Ensure that each page on your website provides unique and valuable content to users and search engines.
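As a rough illustration of the "identify" step, here is a minimal Python sketch that groups URLs whose page text is identical once whitespace and letter case are ignored. It is not how Screaming Frog or Siteliner work internally, and it only catches exact duplicates, not "substantially similar" pages. The page texts and the `/about/` URL are made up for the example; the other two URLs are from the question.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def group_duplicates(pages: dict) -> list:
    """Return groups of URLs whose page text has the same fingerprint."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    # Only fingerprints shared by more than one URL count as duplicates.
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical page texts keyed by URL (the /about/ page is invented).
pages = {
    "https://irlgroup.co.uk/industrial-flooring/": "Industrial  Flooring\nIRL Group Ltd",
    "https://irlgroup.co.uk/index.php/industrial-flooring": "Industrial Flooring IRL Group Ltd",
    "https://irlgroup.co.uk/about/": "About IRL Group Ltd",
}
duplicate_groups = group_duplicates(pages)
print(duplicate_groups)
```

Each group returned is a set of URLs that should probably end up behind one canonical URL, either by redirect or by canonical tag.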

Implement Canonical Tags:

Canonical tags (rel="canonical") are HTML elements that indicate the preferred version of a webpage when multiple versions of the same content exist (e.g., due to URL parameters, session IDs, or alternative paths to the same page).

Add canonical tags to the head section of each webpage, specifying the canonical URL of the page. For example:

```html
<link rel="canonical" href="https://www.example.com/page">
```

Ensure that the canonical URL points to the preferred version of the content and is consistent across all versions of the page.
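One way to check the "consistent across all versions" part is to read the canonical tag out of each variant's HTML and compare them. The sketch below uses only Python's standard `html.parser`. The HTML strings are stand-ins written for this example, not the real pages, and I am assuming the trailing-slash URL is the preferred one.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def find_canonical(html: str):
    """Return the first canonical URL in the HTML, or None if it is missing."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonicals[0] if parser.canonicals else None


def canonicals_agree(html_by_url: dict) -> bool:
    """True when every variant declares the same, non-missing canonical URL."""
    found = {find_canonical(html) for html in html_by_url.values()}
    return len(found) == 1 and None not in found


# Assumed preferred URL and stand-in HTML for the duplicate variants.
preferred = "https://irlgroup.co.uk/industrial-flooring/"
tagged = f'<html><head><link rel="canonical" href="{preferred}"></head></html>'
untagged = "<html><head><title>Industrial Flooring</title></head></html>"

all_tagged = canonicals_agree({
    "https://irlgroup.co.uk/industrial-flooring/": tagged,
    "https://irlgroup.co.uk/index.php/industrial-flooring/": tagged,
})
one_missing = canonicals_agree({
    "https://irlgroup.co.uk/industrial-flooring/": tagged,
    "https://irlgroup.co.uk/index.php/industrial-flooring/": untagged,
})
print(all_tagged, one_missing)
```

If any variant is missing the tag or points somewhere else, the check fails, which is the situation Moz reports as a missing canonical.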

Monitor and Maintain:

Regularly monitor your website for duplicate content issues and ensure that canonical tags are correctly implemented.

Conduct periodic audits to identify and address any new instances of duplicate content or missing canonical tags.

Stay informed about best practices for managing duplicate content and canonicalization, as search engine algorithms and guidelines may evolve over time.

By implementing these solutions, you can effectively address duplicate content issues and ensure that canonical tags are properly utilized, improving the overall quality and performance of your website in terms of SEO and user experience.

-

To resolve the flagged content issues, first identify and remove duplicate content on the page. Then, ensure that canonical tags are added appropriately to indicate the preferred version of the content. Regularly monitor and update the content to maintain its uniqueness and relevancy. Lastly, utilize tools like Moz to continually check for any new issues and address them promptly to maintain SEO integrity.

-

All SEO work must always be "white hat" and follow Google's E-E-A-T guidelines. So remove any duplicated text and replace it with well-written text. This is how we got a business selling Bristol garden offices onto the first page of Google.

-

@ModernPlace

Thank you for your help!!

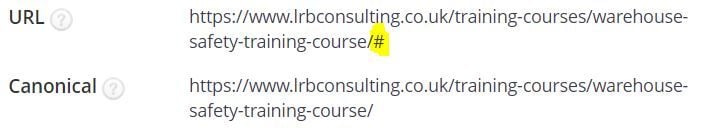

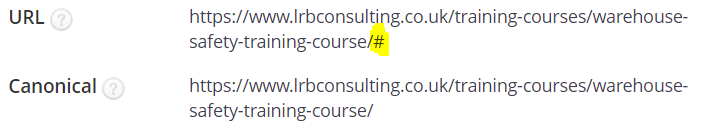

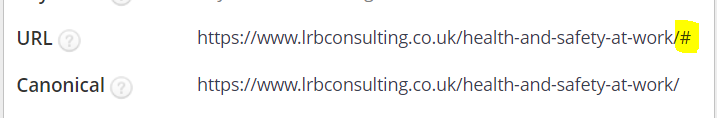

Hope you got my previous message. All my main category and subcategory pages have rel=canonical URLs.

How do I get rid of the index.php pages?

That URL is odd.

My main categories and subcategories contain rel=canonical URLs.

-

@ModernPlace

Thank you for your help. My primary pages contain rel=canonical URLs, and so do the subcategories.

How do I get rid of the index.php/ URLs?

This one is super odd.

-

@techmaniacc

Thank you for your help!

It's important to choose the best possible canonical URL for duplicate content so that search engines understand which page should be treated as the main source for that content. Google does suggest a canonical version of the URL in Search Console, but the suggestion might not always be the best choice for the website, so it's always a good idea to verify that the suggested canonical URL really is the right one for the site. How do I do that?

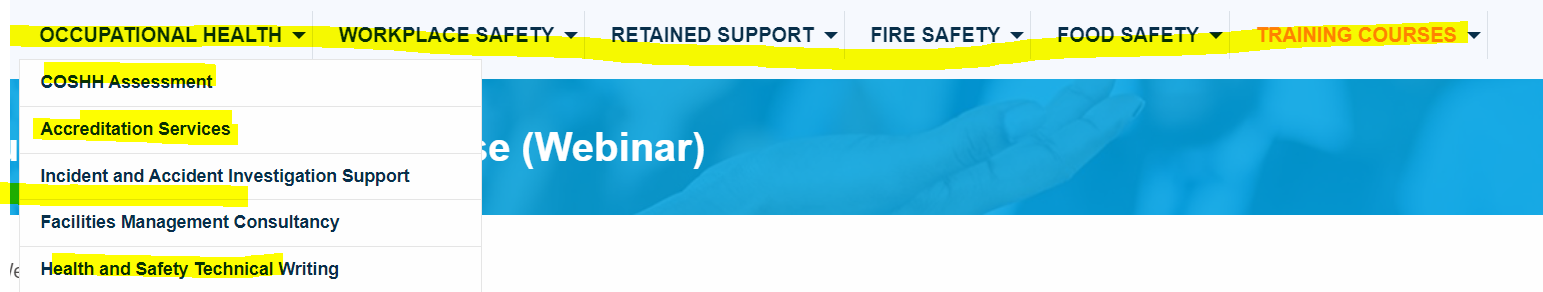

All of these main categories contain canonical URLs; the only strange-looking one is the page "retained support". This is unbelievable. I checked the main categories and subcategories, and all of them have rel=canonical!?

-

It does seem like you have duplicate content.

- You don't want index.php in your link structure (it's useless for SEO)

- You need to set a canonical tag for your primary page and URL structure

- Remove the other instances so there is no duplicate content.
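To make the index.php cleanup concrete, here is a small Python sketch of the mapping a 301 redirect would need to apply: strip the leading `/index.php` segment and add a trailing slash. It only computes the target URL. The redirect itself would still be configured in your web server or CMS, which is not shown here. I am assuming the trailing-slash form is the preferred one, since that is the first URL in the question.

```python
from urllib.parse import urlsplit, urlunsplit


def redirect_target(url: str) -> str:
    """Map a URL to the version without /index.php and with a trailing slash."""
    parts = urlsplit(url)
    path = parts.path
    # Drop a leading /index.php segment, e.g. /index.php/industrial-flooring.
    if path == "/index.php" or path.startswith("/index.php/"):
        path = path[len("/index.php"):]
    # Assumption: preferred URLs end with a slash (not true for file URLs).
    if not path.endswith("/"):
        path += "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


def needs_redirect(url: str) -> bool:
    """True when the URL differs from its preferred form."""
    return url != redirect_target(url)


flagged = [
    "https://irlgroup.co.uk/industrial-flooring/",
    "https://irlgroup.co.uk/index.php/industrial-flooring",
    "https://irlgroup.co.uk/index.php/industrial-flooring/",
]
for url in flagged:
    print(url, "->", redirect_target(url), "(redirect)" if needs_redirect(url) else "(keep)")
```

All three flagged URLs map to the same target, so once the two index.php variants return a 301 to it, only one version is left to index.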

-

@Kingagogomarketing

Hello, adding rel=canonical tags to the duplicate pages and specifying the preferred URL for search engines is the best solution for the issues with duplicate content and missing canonical tags. This lets search engines know which version of the content to index, so the duplicates do not compete with each other. Additionally, be sure that each page contains original, excellent material. Keep up with the latest SEO updates by visiting https://www.head45.com/google-algorithm-updates/ for more information.

