Is there a tool to figure out bad backlinks?

-

With the new changes to the Google algorithm, I'm trying to figure out which links Google may think are hurting my site. Any thoughts? Thanks.

-

I see.

I would presume that these guys have been penalised then. It may be that they are now trying to reindex their site and are still appearing in the listings but much lower now.

There isn't really a definite way of knowing whether they have been banned, but as good practice, I would try to get my link removed from any website I had doubts about.

You could maybe try contacting the Google Webmaster team to confirm this, but I don't know how useful they will be. Then again, it's worth a try at least.

Matt.

-

Hi Matt,

The sites I believe might have a problem are still showing up in the results like that. However, where they might have ranked on the first page before, some of the blog sites that we link with are no longer in the top 100 results. Strange...

-

Hi Morgan,

The website will show in Google if you do a site:www.domain.com search.

If you search for 'domain' (replace this with their website name, e.g. SEOmoz) and they don't show up in the top few listings, then you can be pretty sure that they have been banned.

If that is the case, I would either contact the webmaster to have the link removed or manually delete it yourself if you can.
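
To save some typing when running these two checks by hand, here is a minimal sketch that builds the search URLs for a given site. The function name `manual_check_urls` and the example domain are hypothetical, and it assumes the usual `https://www.google.com/search?q=` query format. It only produces links for you to open in a browser; it does not query Google itself.

```python
from urllib.parse import quote_plus

SEARCH_BASE = "https://www.google.com/search?q="  # assumed standard search URL


def manual_check_urls(domain, brand_name):
    """Build the two search URLs described above for one linking site.

    'indexed' is the site: query (is anything from the domain listed at all?)
    'brand' is a plain search for the site's name (does it still rank for it?)
    """
    queries = {
        "indexed": f"site:{domain}",
        "brand": brand_name,
    }
    return {label: SEARCH_BASE + quote_plus(q) for label, q in queries.items()}


if __name__ == "__main__":
    # Hypothetical domain and brand name, for illustration only.
    for label, url in manual_check_urls("www.example.com", "example blog").items():
        print(f"{label}: {url}")
```

Open both links for each suspicious site. If the first one returns pages but the second no longer shows the site near the top, that matches the pattern described above.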

Good luck!

Matt.

-

Hi Matt,

Thanks for the help. If a site that links to you is still showing page results, does that mean it is not banned? I see a few that I have on blogrolls, but one such site still shows 1,500 results in Google.

-

Hi Morgan,

Unfortunately, there isn't a quick way to do this.

What I have done is use Open Site Explorer to download the .csv file of all the domains linking to my website.

Now that I have them in a spreadsheet, it is a bit easier to filter through them. I have been drilling down on links from blogs with a particularly low PA (Page Authority) or DA (Domain Authority), then doing the hard task of checking each one individually to see whether it has been banned by Google. You can do this by searching for their domain to see if they appear.
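
If you would rather script the filtering than do it in a spreadsheet, something like the sketch below could work. It is only an illustration: the column names (`Root Domain`, `Domain Authority`, `Page Authority`) and the threshold of 20 are assumptions, so check them against the headers in your own export and pick cut-offs that suit your link profile.

```python
import csv
import io


def low_authority_domains(csv_file, max_da=20.0, max_pa=20.0,
                          domain_col="Root Domain",
                          da_col="Domain Authority",
                          pa_col="Page Authority"):
    """Return (domain, DA, PA) rows whose DA or PA is at or below the cut-off.

    Column names and thresholds are assumptions; adjust them to your export.
    Results are sorted weakest first so the riskiest links are checked first.
    """
    flagged = []
    for row in csv.DictReader(csv_file):
        try:
            domain = row[domain_col]
            da = float(row[da_col])
            pa = float(row[pa_col])
        except (KeyError, TypeError, ValueError):
            continue  # skip rows with missing or non-numeric values
        if da <= max_da or pa <= max_pa:
            flagged.append((domain, da, pa))
    flagged.sort(key=lambda item: (item[1], item[2]))
    return flagged


if __name__ == "__main__":
    # Made-up sample data standing in for a real export file.
    sample = io.StringIO(
        "Root Domain,Domain Authority,Page Authority\n"
        "example-blog.test,12,8\n"
        "trusted-news.test,78,65\n"
        "tiny-directory.test,25,15\n"
    )
    for domain, da, pa in low_authority_domains(sample):
        print(f"{domain}: DA={da:.0f} PA={pa:.0f}")
```

With a real export you would pass `open("your-export.csv", newline="")` instead of the sample. The output is just a shortlist; each domain on it still needs the manual search check.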

This is a slow process, but better safe than sorry, eh?

Hope this helps.

Matt.

Related Questions

-

Exposure from backlinks for job posting URL. Will soon expire, how best to keep the backlink juice?

Hi All, First post and apologies if this seems obvious. I run a niche jobs board and recently one of our openings was shared online quite heavily after a press release. The exposure has been great but my problem is the URL generated for the job post will soon expire. I was wondering the best way to keep the "link juice" as I can't extend the post indefinitely as the job has been filled. Would a 301 redirect work best in this case? Thanks in advance for the info!

Technical SEO | | MartinAndrew0 -

URL Inspector, Rich Results Tool, GSC unable to detect Logo inside Embedded schema

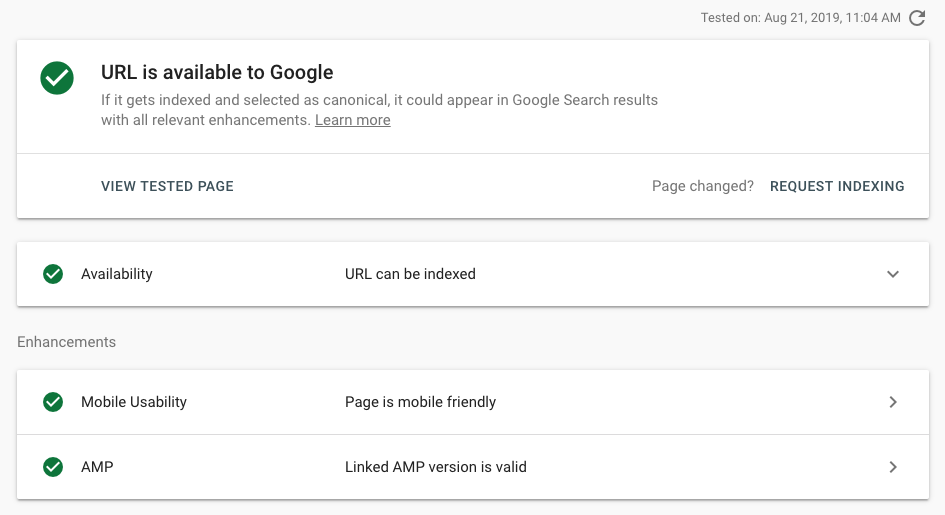

I work on a news site and we updated our Schema set up last week. Since then, valid Logo items are dropping like flies in Search Console. Both URL inspector & Rich Results test cannot seem to be able to detect Logo on articles. Is this a bug or can Googlebot really not see schema nested within other schema?Previously, we had both Organization and Article schema, separately, on all article pages (with Organization repeated inside publisher attribute). We removed the separate Organization, and now just have Article with Organization inside the publisher attribute. Code is valid in Structured Data testing tool but URL inspection etc. cannot detect it. Example: https://bit.ly/2TY9Bct Here is this page in URL inspector:

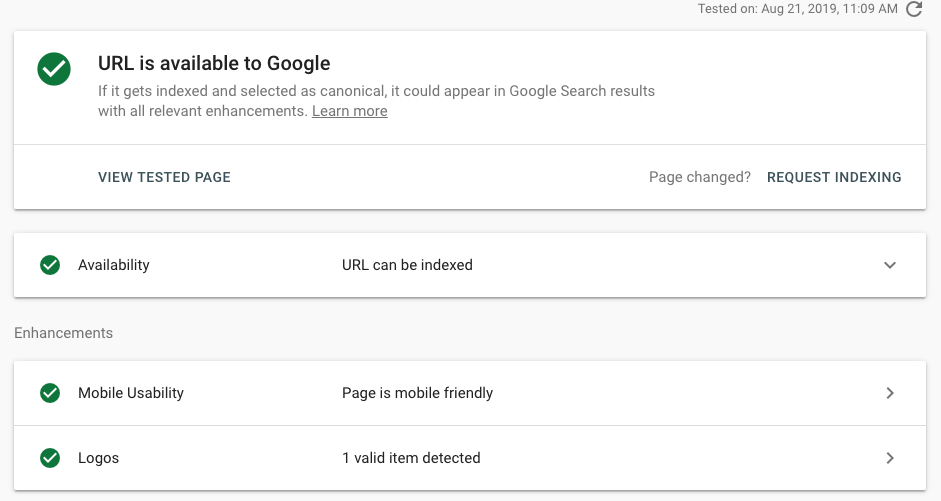

Technical SEO | | ValnetInc By comparison, we also have Organization schema (un-nested) on our homepage. Interestingly enough, the tools can detect that no problem. That's leading me to believe that either nested schema is unreadable by Googlebot OR that this is not an accurate representation of Googlebot and it's only unreadable by the testing tools. Here is the homepage in URL inspector:

By comparison, we also have Organization schema (un-nested) on our homepage. Interestingly enough, the tools can detect that no problem. That's leading me to believe that either nested schema is unreadable by Googlebot OR that this is not an accurate representation of Googlebot and it's only unreadable by the testing tools. Here is the homepage in URL inspector:  In pseudo-code, our OLD schema looked like this: The NEW schema set up has the same Article schema set up, but the separate script for Organization has been removed. We made the change to embed our schema for a couple reasons: first, because Google's best practices say that if multiple schemas are used, Google will choose the best one so it's better to just have one script; second, Google's codelabs tutorial for schema uses a nested structure to indicate hierarchy of relevancy to the page. My question is, does nesting schemas like this make it impossible for Googlebot to detect a schema type that's 2 or more levels deep? Or is this just a bug with the testing tools?

0

In pseudo-code, our OLD schema looked like this: The NEW schema set up has the same Article schema set up, but the separate script for Organization has been removed. We made the change to embed our schema for a couple reasons: first, because Google's best practices say that if multiple schemas are used, Google will choose the best one so it's better to just have one script; second, Google's codelabs tutorial for schema uses a nested structure to indicate hierarchy of relevancy to the page. My question is, does nesting schemas like this make it impossible for Googlebot to detect a schema type that's 2 or more levels deep? Or is this just a bug with the testing tools?

0 -

Word mentioned twice in URL? Bad for SEO?

Is a URL like the one below going to hurt SEO for this page? /healthcare-solutions/healthcare-identity-solutions/laboratory-management.html I like the match the URL and H1s as close as possible but in this case it looks a bit funky. /healthcare-solutions/healthcare-identity-solutions/laboratory-management.html

Technical SEO | | jsilapas0 -

Structured Data verses Data Highlighter Tool

Hello, I would like to implement structured data on a site, and I am a newbie in this. (I know, hard to believe considering it's 2015.) I'm slightly confused on why would I use structured data, if I can just tag the different elements with the data highlighter tool in Google Webmaster Tools. Should I focus on one or the other? The sites I would be working on would be considered a "local business" and have the basics (i.e. phone, address, hours, etc).

Technical SEO | | sumoleap0 -

When i type site:jamalon.com to discover number of pages indexed it gives me different result from google web master tools

when i type site:jamalon.com to discover number of pages indexed it gives me different result from google web master tools

Technical SEO | | Jamalon0 -

Google Webmaster Tools : no data available

Hi guys I have a website which is 2 years old. Since 03/01/2013 I have no data in Google Webmaster Tools > Trafic > Search queries. The queries, the impressions and the clics dropped suddenly from one day to the next. I checked the rank of my keywords and the traffic of my site. They are stable and didn't move which means that they don't cause the problem. Has anybody had the same problem ? Is it Google Webmaster Tools bug ? Many thanks.

Technical SEO | | PFX1110 -

Setting a geographic target in webmaster tools

If a site is targeting traffic from around the world should I set the geographic targeting in webmaster tools under 'settings' or leave it? Any help would be much appreciated!

Technical SEO | | SamCUK0 -

Backlinks to home page vs internal page

Hello, What is the point of getting a large amount of backlinks to internal pages of an ecommerce site? Although it would be great to make your articles (for example) strong, isn't it more important to build up the strength of the home page. All of My SEO has had a long term goal of strengthening the home page, with just enough backlinks to internal pages to have balance, which is happening naturally. The home page of our main site is what comes up on tons of our keyword searches since it is so strong. Please let me know why so much effort is put into getting backlinks to internal pages. Thank you,

Technical SEO | | BobGW0